How Does S3 Work with AEM?

S3 can be used with AEM in two ways:

- Clustered, meaning all servers share a single bucket

- Stand-alone, wherein each server has its own bucket

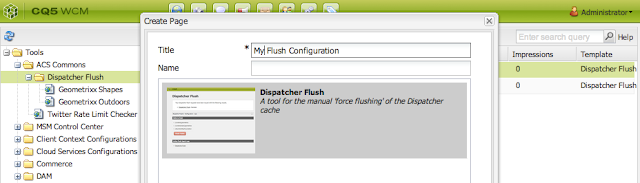

A good starting point for explaining how S3 works with AEM is the stand-alone method. The installation and configuration process is described on docs.adobe.com, and a special feature pack must be installed. Once the necessary configuration changes have been made and AEM is started, it automatically starts syncing all its binary data to the S3 storage bucket.

A number of important aspects are configurable:

- Size of the local cache

- Maximum number of threads to be used for uploading

- Minimum size of objects to be stored in S3

- Maximum size of binaries to be cached
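As a rough illustration, the four settings above could be collected and rendered as configuration lines. This is only a sketch: the property names (`cacheSize`, `uploadThreads`, `minRecordLength`, `maxCachedBinarySize`) and the values are assumptions that should be checked against the data store documentation for your AEM version, and `render_config` is a hypothetical helper, not part of AEM.

```python
# Hypothetical tuning values. The property names are assumptions and must be
# verified against the data store documentation for your AEM version.
S3_SETTINGS = {
    "cacheSize": 50 * 1024**3,        # size of the local cache, in bytes
    "uploadThreads": 10,              # maximum number of threads used for uploading
    "minRecordLength": 16 * 1024,     # minimum size of objects stored in S3, in bytes
    "maxCachedBinarySize": 17 * 1024, # maximum size of binaries to be cached, in bytes
}


def render_config(settings):
    """Render settings as key="value" lines, one property per line."""
    return "\n".join(f'{key}="{value}"' for key, value in settings.items())


print(render_config(S3_SETTINGS))
```

Keeping the values in one place like this makes it easier to review them before they are copied into the actual connector configuration.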

Depending on the number of assets that need to be migrated, the initial import may take anywhere from several hours to a few days. This process can be sped up by allowing many simultaneous uploads.

If the server has enough CPU power and network bandwidth, this can make a great difference. Amazon S3 can easily handle 100 requests per second with bursts of up to 800 requests, so the available bandwidth and CPU power are the main factors at play during the migration process.
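To get a feel for how long an import might take, a back-of-the-envelope estimate can help. The sketch below assumes one upload request per asset and a steady network link; the function name, its parameters, and the example numbers are made up for illustration, and CPU limits are ignored.

```python
def estimate_import_hours(asset_count, total_gb, requests_per_second, bandwidth_mbit):
    """Return a rough lower bound on the import time, in hours.

    The import cannot finish faster than the slower of two limits:
    the request rate (one upload per asset) and the network bandwidth.
    """
    request_seconds = asset_count / requests_per_second
    # Convert gigabytes to megabits (1 GB = 8000 Mbit), then divide by link speed.
    transfer_seconds = (total_gb * 8 * 1000) / bandwidth_mbit
    return max(request_seconds, transfer_seconds) / 3600


# Hypothetical example: 2 million assets, 5 TB in total,
# 100 requests per second, and a 1 Gbit/s link.
hours = estimate_import_hours(2_000_000, 5000, 100, 1000)
print(round(hours, 1))  # → 11.1
```

In this example the bandwidth, not the request rate, is the limiting factor, which matches the point made above.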

In the Amazon S3 buckets each asset is stored as a separate entry. The S3 connector uses unique node identifiers to keep track of each asset.

Once imported into S3 the data can be viewed via the AWS console:

Notice the unique identifiers. Each one is made up of a 36-character identifier and a 4-character prefix. This prefix is important for maintaining the performance of the bucket's index because it ensures that key names are distributed evenly across index partitions. This is quite technical, but vital to the performance of an S3 bucket. The S3 connector takes care of all of this.
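The effect of the prefix can be illustrated with a small sketch. It does not reproduce the connector's actual naming scheme: the keys here are hypothetical, derived from a SHA-1 hex digest (40 characters, split into 4 + 36), and the `-` separator is an assumption.

```python
import hashlib
from collections import Counter


def make_key(content: bytes) -> str:
    """Build a hypothetical key: 4-character prefix, '-', 36-character identifier."""
    digest = hashlib.sha1(content).hexdigest()  # 40 hex characters
    return f"{digest[:4]}-{digest[4:]}"


def split_key(key: str):
    """Split a key into (prefix, identifier) at the first '-'."""
    prefix, _, identifier = key.partition("-")
    return prefix, identifier


# Generate keys for 10,000 made-up assets and count how many share each prefix.
keys = [make_key(f"asset-{i}".encode()) for i in range(10_000)]
prefixes = Counter(split_key(key)[0] for key in keys)
print(len(prefixes), "distinct prefixes; busiest prefix holds", max(prefixes.values()), "keys")
```

Because the prefix comes from a hash, the keys spread over many prefixes instead of clustering under a few, which is the property the paragraph above describes.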

CLUSTERED S3 ARCHITECTURE

When storage size runs into the terabyte range, a clustered S3 architecture becomes interesting: instead of having 3 buckets for 3 servers with a total of 15 TB, it is also possible to share a single bucket. Think of it as the family bucket at KFC. This approach would save two thirds of the storage space. Besides saving space, it also eliminates the need for replicating huge binary files such as videos between authors and publishers. This is called 'binary-less replication'.
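The saving can be worked out for any number of servers. The sketch below assumes every server would otherwise hold a full copy of the same binaries (5 TB each in the example above); `storage_comparison` is a hypothetical helper and expects whole-number inputs.

```python
from fractions import Fraction


def storage_comparison(server_count: int, binaries_tb: int):
    """Compare one bucket per server with a single shared bucket.

    Returns (separate_tb, shared_tb, saved_fraction).
    Assumes each server stores the same set of binaries.
    """
    separate_tb = server_count * binaries_tb  # one full copy per server
    shared_tb = binaries_tb                   # one copy shared by all servers
    saved = Fraction(separate_tb - shared_tb, separate_tb)
    return separate_tb, shared_tb, saved


separate, shared, saved = storage_comparison(3, 5)
print(separate, shared, saved)  # → 15 5 2/3
```

With more servers the saved fraction grows, for example three quarters with four servers.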

HOW TO PLAN FOR MIGRATION TO S3

Before considering a move to S3 it is important to know the answers to the following questions:

- What is the number of assets that will be imported? What is the total size?

- How many different renditions will be generated?

- How much space will the renditions take up?

- What is the expected growth for the upcoming years?

The number of assets to be imported will give some sense of the amount of time and work involved. If the number of assets is huge, then it may be worthwhile to automate or script the import process.

In any case it is a good idea to separate the import into two stages:

- Importing the original files

- Generating renditions and extracting the XMP metadata.

Running the workflows for renditions and metadata after all files have been imported will ensure that these processes don't slow each other down. It is also a checkpoint: a good opportunity to create a backup.

Finally, as a rule of thumb: if the total size equals or exceeds 500 GB, a move to S3 is advisable. Similarly, if the repository is expected to grow larger than 500 GB, or its growth cannot easily be accommodated on the current hosting platform, then a move to S3 is also suggested.
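The rule of thumb can be combined with the growth question from the planning list. The sketch below assumes compound yearly growth, which is a simplification; the function names and example figures are hypothetical, and the hosting-platform consideration is not modeled.

```python
S3_THRESHOLD_GB = 500  # rule-of-thumb threshold from the text above


def projected_size_gb(current_gb, yearly_growth_rate, years):
    """Project the repository size, assuming compound yearly growth."""
    return current_gb * (1 + yearly_growth_rate) ** years


def s3_advisable(current_gb, yearly_growth_rate, years):
    """Apply the rule of thumb to the current and the projected size."""
    if current_gb >= S3_THRESHOLD_GB:
        return True
    return projected_size_gb(current_gb, yearly_growth_rate, years) > S3_THRESHOLD_GB


# Hypothetical example: 300 GB today, growing 30% per year, planning 2 years ahead.
print(s3_advisable(300, 0.30, 2))  # → True
```

A repository of 100 GB growing 10% per year over three years stays well below the threshold, so the same check returns False for it.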

MORE INFO?

If you are interested in more detailed information, leave a comment and we will reach out to you.

Useful links:

- https://experienceleague.adobe.com/docs/experience-manager-65/deploying/deploying/data-store-config.html

- https://adobe-consulting-services.github.io/acs-aem-commons/features/mcp-tools/asset-ingestion/s3-asset-ingestor/index.html

- https://www.aemrules.com/2022/05/how-to-configure-s3-in-aem.html
